Technical SEO – 5 Step Checklist

As the saying goes, “Content is King”.

While it’s true that scintillating content is key to enhancing your website’s reception and reputation, you may find your traffic numbers suffering if there are some technical issues behind the scenes.

In the early years of search, marketers and developers focused on their own tasks without bothering to learn what happened on the other side of the fence.

Today, things are different. Marketers need to have an understanding of all the various technical concepts. At the same time, developers also need to know the ins and outs of digital marketing.

An understanding of both technical and creative aspects is needed.

This combination is what makes an effective digital marketer. Once you’re able to recognise the technical faults in a system and know which buttons to press when calamity strikes, you have better odds of seeing your website take off.

In a bid to enlighten you on all the key points you need to be conversant with, we’ve prepared this technical SEO checklist.

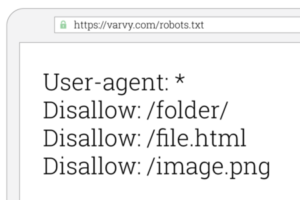

1. Robots.txt File

To check the robots.txt file, follow this path:

Google Search Console > Crawl > robots.txt Tester

The robots.txt file plays an important role. It gives directives to search engine crawlers, and every website needs one in its root directory.

For it to work, it must be properly formatted, and it should only restrict files or directories that you don’t want search engines to crawl. While you’re at it, ensure that it points to the location of your XML sitemap.

If you’d like to learn more about how the robots.txt file works, Google’s recommendations are a good place to start.

Having been in the industry for a while now, one error we’ve encountered many times happens during a website redesign. The dev site is usually blocked in robots.txt with a Disallow: / rule, and that rule is then carried over to the live site. To avoid taking your website out of the index, ensure that the rule is removed before you launch the redesigned platform.
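As a rough illustration of the pre-launch check described above, the sketch below uses Python's standard `urllib.robotparser` to see which paths a given robots.txt blocks. The two robots.txt bodies, the `blocked_paths` helper and the list of paths are made up for the example. In practice you would load your own file instead.

```python
from urllib.robotparser import RobotFileParser


def blocked_paths(robots_txt, base_url, paths, agent="*"):
    """Return the paths that this robots.txt text disallows for `agent`."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return [p for p in paths if not parser.can_fetch(agent, base_url + p)]


# Hypothetical robots.txt left over from a dev site: blocks everything.
DEV_ROBOTS = """\
User-agent: *
Disallow: /
"""

# Hypothetical live robots.txt: blocks one private directory only
# and points crawlers at the XML sitemap.
LIVE_ROBOTS = """\
User-agent: *
Disallow: /admin/
Sitemap: http://www.example.com/sitemap.xml
"""

# Illustrative paths; swap in the pages that matter on your own site.
PATHS = ["/", "/products/", "/admin/login"]

if __name__ == "__main__":
    site = "http://www.example.com"
    print("dev rules block: ", blocked_paths(DEV_ROBOTS, site, PATHS))
    print("live rules block:", blocked_paths(LIVE_ROBOTS, site, PATHS))
```

If the first list ever shows up for your live domain, the leftover Disallow: / rule is still in place.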

2. Canonical Link Elements

It’s not uncommon for a website to end up with several different URLs that serve the same or very similar content. Since this is not ideal for search, you can use canonical link elements to point all of those URLs at a single version.

Canonical link elements are often used to ensure that the preferred version of a page is the one that stays indexed.

When working with them, there are some guidelines to follow. Ideally, the canonical should reference a URL that is indexed and does not redirect. The URL should also be absolute, including the protocol and domain, something like http://www.example.com/

A canonical tag that fits this would be:

<link rel="canonical" href="http://www.example.com/product.php?item=foo987" />

To check this, you can use a platform like Screaming Frog.
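For a quick spot check on a single page, the sketch below pulls the canonical link element out of some HTML and confirms that the URL is absolute. It only uses Python's standard library. The `CanonicalFinder` and `check_canonical` names are our own, and the check does not test whether the target redirects or is indexed, which still needs a crawler.

```python
from html.parser import HTMLParser
from typing import List, Optional, Tuple
from urllib.parse import urlparse


class CanonicalFinder(HTMLParser):
    """Collect the href of every <link rel="canonical"> element."""

    def __init__(self) -> None:
        super().__init__()
        self.canonicals: List[str] = []

    def handle_starttag(self, tag, attrs):
        if tag != "link":
            return
        attr = dict(attrs)
        rel_values = (attr.get("rel") or "").lower().split()
        if "canonical" in rel_values and attr.get("href"):
            self.canonicals.append(attr["href"])


def check_canonical(html: str) -> Tuple[Optional[str], List[str]]:
    """Return (canonical URL or None, list of problems found)."""
    finder = CanonicalFinder()
    finder.feed(html)
    if not finder.canonicals:
        return None, ["no canonical link element found"]
    problems = []
    if len(finder.canonicals) > 1:
        problems.append("more than one canonical link element")
    href = finder.canonicals[0]
    parts = urlparse(href)
    # An absolute URL carries both a scheme (http/https) and a domain.
    if not (parts.scheme and parts.netloc):
        problems.append("canonical URL is not absolute")
    return href, problems


if __name__ == "__main__":
    page = (
        '<html><head><link rel="canonical" '
        'href="http://www.example.com/product.php?item=foo987" />'
        "</head></html>"
    )
    print(check_canonical(page))
```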

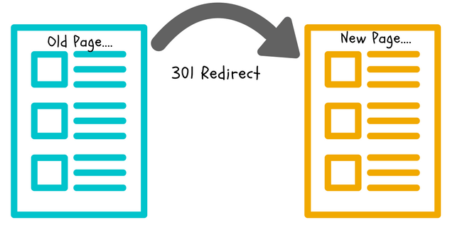

3. Redirects

With redirects, you can let search engines and visitors know that a webpage has moved to a new location. This comes in handy when there’s a URL change or if a page is deleted.

While there are different types of redirects, we favour the 301 redirect for SEO purposes. It tells search engines that a page has moved permanently.

The best way to handle redirects is to point each one straight at the final destination. Redirect chains and loops are not advisable, so keep the number of redirects to a minimum wherever possible.

You can use Screaming Frog and redirect-checker.org to check.
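To make the idea of chains and loops concrete, here is a small sketch that walks a redirect chain and reports how many hops it takes, whether it loops, and which hops are not permanent. It runs against a made-up lookup table (`SITE`) rather than the network. The `trace_redirects` function and the `resolve` callback are our own illustration, and a real check would replace `fake_resolve` with an HTTP request that does not follow redirects automatically.

```python
REDIRECT_CODES = {301, 302, 303, 307, 308}
PERMANENT_CODES = {301, 308}  # 308 is the other permanent redirect status


def trace_redirects(url, resolve, max_hops=10):
    """Follow redirects from `url` using `resolve(url) -> (status, target)`."""
    chain = [url]
    temporary = []  # URLs that answered with a non-permanent redirect
    loop = False
    for _ in range(max_hops):
        status, target = resolve(chain[-1])
        if status not in REDIRECT_CODES or target is None:
            break  # reached a final destination (or an error page)
        if status not in PERMANENT_CODES:
            temporary.append(chain[-1])
        chain.append(target)
        if chain.count(target) > 1:
            loop = True  # we have been at this URL before
            break
    return {
        "chain": chain,
        "hops": len(chain) - 1,
        "loop": loop,
        "temporary": temporary,
    }


# Hypothetical site map: URL -> (status code, redirect target).
SITE = {
    "http://www.example.com/old": (301, "http://www.example.com/interim"),
    "http://www.example.com/interim": (302, "http://www.example.com/new"),
    "http://www.example.com/new": (200, None),
    "http://www.example.com/a": (301, "http://www.example.com/b"),
    "http://www.example.com/b": (301, "http://www.example.com/a"),
}


def fake_resolve(url):
    """Stand-in for an HTTP request; unknown URLs answer 404."""
    return SITE.get(url, (404, None))


if __name__ == "__main__":
    print(trace_redirects("http://www.example.com/old", fake_resolve))
    print(trace_redirects("http://www.example.com/a", fake_resolve))
```

Anything with more than one hop is a candidate for pointing the first URL directly at the last one.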

4. Duplicate Content

According to Google, duplicate content is content that appears in more than one place, whether within your own website or across other web domains.

Whenever pages are duplicated, Google filters the duplicates out of search results. By doing so, it ensures that only distinct information is visible.

Having plenty of duplicate content can harm your organic traffic. Since most instances of duplicate content are unintentional, you can use canonical link elements to indicate which version is the original.

To verify the status of your website, you can use a platform like siteliner.com.
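If you want a rough feel for how similar two pages are before reaching for a tool, the sketch below compares their text using overlapping three-word sequences (shingles) and a Jaccard score. This is only an illustration of the general idea. It is not how Google or Siteliner measures duplication, and the function names and the shingle size are our own choices.

```python
import re


def shingles(text, size=3):
    """Return the set of overlapping `size`-word sequences in `text`."""
    words = re.findall(r"[a-z0-9']+", text.lower())
    if len(words) < size:
        return {tuple(words)} if words else set()
    return {tuple(words[i:i + size]) for i in range(len(words) - size + 1)}


def similarity(text_a, text_b, size=3):
    """Jaccard similarity of the two shingle sets, from 0.0 to 1.0."""
    a, b = shingles(text_a, size), shingles(text_b, size)
    if not a and not b:
        return 0.0  # nothing to compare
    return len(a & b) / len(a | b)


if __name__ == "__main__":
    page_one = "the quick brown fox jumps"
    page_two = "the quick brown fox sleeps"
    # Two of the four distinct shingles are shared, so this prints 0.5.
    print(similarity(page_one, page_two))
```

A score close to 1.0 suggests the two pages are near-duplicates and one of them probably needs a canonical link element.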

5. Mobile-Friendliness

These days, it’s crucial for websites to be mobile-friendly. This becomes all the more necessary once you factor in Google’s mobile-first index.

You want visitors to your website to have a great experience irrespective of the device they are viewing the content on.